Staer Warehouses: An Open Dataset for Physical AI Research

The 15 warehouse environments included in Staer Warehouses v0.1, from open staging areas to dense high-bay racking.

Today we're releasing v0.1 of an open synthetic warehouse dataset for physical AI research. It was built with NVIDIA Isaac Sim, and we believe it's the most densely annotated indoor industrial dataset currently available.

| Property | Value |
|---|---|
| Scenes | 15 warehouse environments |
| Total floor area | 78,400 m² (~14 football fields) |
| Object instances | ~200,000 individually placed 3D objects |
| Object categories | 78 distinct types |
| Sequences | 1,500 (100 per warehouse, with randomized changes) |
| Modalities | RGB, depth, semantic segmentation, instance segmentation |
| Resolution | 1920×1080 |
| 3D assets | 512 unique variants from 6 NVIDIA asset packs |

Every frame includes all four modalities, perfectly synchronized and noise-free.

This is v0.1. More warehouse types, more sequences, and additional modalities are coming. We built it because we needed it. We're releasing it because the field does too.

Why Warehouses Are Hard

Most 3D vision benchmarks feature environments that are, from a reconstruction standpoint, cooperative. ScanNet gives you living rooms with distinctive furniture. KITTI gives you streets with trees and parked cars. Each scene has enough visual variety to anchor feature matching and establish reliable correspondences.

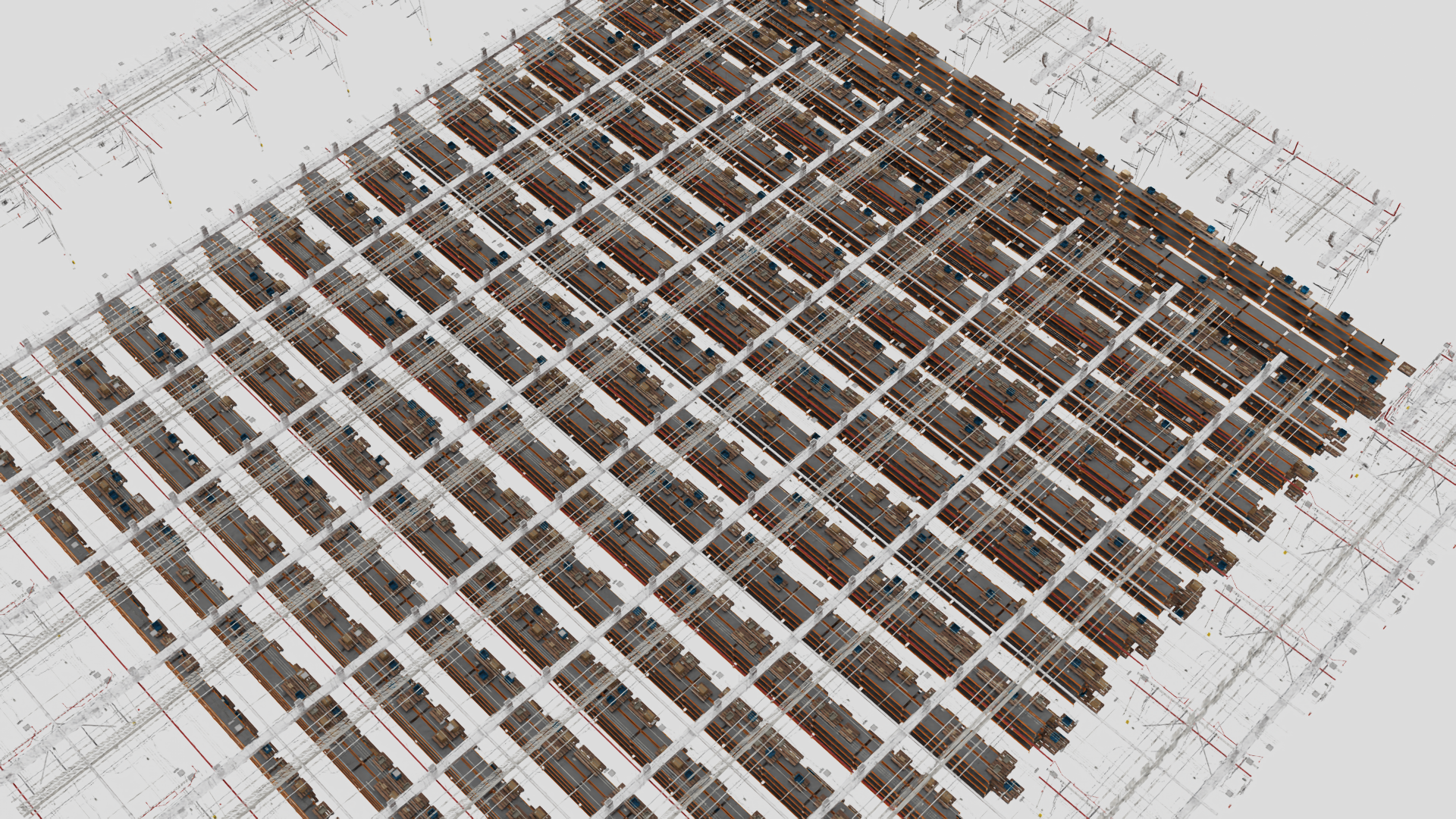

Identical racking extending hundreds of meters. Every aisle looks like every other aisle.

A warehouse is the opposite. Everything repeats by design. The racking is modular. The aisles are parallel. The shelves are identical. The boxes are brown. The floor is gray. Every visual cue that a reconstruction model relies on (texture, structure, distinctive geometry) is deliberately minimized in the name of operational efficiency.

This creates a specific failure mode for the current generation of feedforward 3D models. Architectures like VGGT, DUSt3R and its variants, and MapAnything produce impressive results on standard benchmarks, but struggle with the kind of aggressive visual repetition that defines industrial spaces. The model sees a rack, then another identical rack, and assumes they're the same structure viewed from a different angle. Aisles fold on top of each other. The geometry collapses quietly. No error, just a wrong map.

These aren't edge cases. Warehouses are among the primary environments where physical AI needs to operate. A robot navigating autonomously needs a spatial model that can distinguish "the same rack again" from "a different rack that looks exactly the same." Current benchmarks don't test for this.

What's in the Dataset

Every frame ships with four perfectly aligned modalities:

- RGB at 1920×1080, ray-traced with physically based lighting

- Depth as 16-bit inverse depth maps, millimeter-accurate at every pixel, with no LiDAR artifacts or stereo failures

- Semantic segmentation across 78 object categories: racks, shelves, pallets, boxes, crates, barrels, drums, conveyors, forklifts, traffic cones, safety equipment, infrastructure, and more

- Instance segmentation with approximately 200,000 individually placed 3D objects across the dataset, where every rack beam, shelf, pallet, box, and infrastructure element has its own unique ID
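Reading the depth channel means converting stored inverse depth back to meters. The release notes above do not say how inverse depth is quantized, so the sketch below assumes a simple linear mapping with a hypothetical near plane (`NEAR_M`). Treat both the mapping and the constant as placeholders until the dataset documentation confirms the real encoding.

```python
import numpy as np

# ASSUMPTION: the quantization of inverse depth is not stated in the release notes.
# This sketch assumes a linear mapping where raw value 65535 corresponds to the
# nearest representable distance (NEAR_M) and raw value 0 means "infinitely far".
NEAR_M = 0.1  # hypothetical near-plane distance in meters


def inverse_depth_to_meters(raw: np.ndarray, near_m: float = NEAR_M) -> np.ndarray:
    """Convert a uint16 inverse-depth image to metric depth in meters."""
    if raw.dtype != np.uint16:
        raise TypeError("expected a uint16 inverse-depth image")
    inv_depth = raw.astype(np.float64) / 65535.0 / near_m  # units: 1/m
    depth = np.full(raw.shape, np.inf, dtype=np.float64)
    valid = inv_depth > 0
    depth[valid] = 1.0 / inv_depth[valid]
    return depth


# Toy 2x2 "image" standing in for one decoded depth frame.
raw = np.array([[65535, 6553], [655, 0]], dtype=np.uint16)
depth_m = inverse_depth_to_meters(raw)
```

Under a mapping like this, uniform steps in the stored value correspond to fine distance steps near the camera and coarser steps far away, which is the usual reason to store inverse depth rather than depth.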

The v0.1 release covers 15 scenes across 7 layout types drawn from real logistics operations:

| Scene | Type | Size | Objects |
|---|---|---|---|
| Block stacking | Floor pallets, drive aisles | 120 × 80 m | ~14,600 |
| Pallet staging | Open floor operations | 100 × 100 m | ~5,500 |
| High-bay | 9 m tall racking | 100 × 120 m | ~39,800 |
| E-commerce | Dense selective racking | 100 × 120 m | ~50,800 |
| Receiving | Mixed-use with staging zones | 100 × 120 m | ~60,200 |
| Sparse | Low-density selective | 80 × 80 m | ~7,000 |
| Cross-aisle | Perpendicular rack zones | 80 × 80 m | ~16,400 |
| Narrow corridor | Wall racks, tight passage | 10 × 100 m | ~3,000 |
| Chemical | Hazmat drums and storage | 40 × 30 m | ~300 |
| Conveyor | Sortation lines | 40 × 30 m | ~300 |
| Loading dock | Dock operations | 40 × 30 m | ~300 |
| Pack-and-ship | Packing stations | 40 × 30 m | ~300 |
| Packing station | Workstation layout | 40 × 30 m | ~300 |
| Mezzanine | Multi-level facility | 40 × 30 m | ~300 |
| Mixed cargo | Varied goods handling | 40 × 30 m | ~300 |

The procedural generation system can scale to arbitrary warehouse sizes. This is a single 100x100m scene generated from one preset and one seed.

Each warehouse includes 100 sequences with randomized operational changes between them: pallets moved, inventory shifted, loading states varied. This captures the kind of environmental drift that real warehouses undergo daily and that static datasets miss entirely. Camera trajectories follow serpentine walkthrough paths at human walking speed through the aisles, producing sequences of 500 to 2,000 frames per scene.

The generation system supports 31 preset configurations across 11 layout types, far more than what ships in v0.1. Future releases will expand the warehouse coverage, add new modalities, and increase sequence diversity.

200,000 Instances, Zero Annotators

The instance annotation density is worth highlighting because it's where synthetic data has a clear, practical advantage over real-world collection.

A densely packed warehouse scene with thousands of individually labeled objects across multiple shelf levels.

The 15 scenes contain approximately 200,000 individually placed 3D objects. A single scene like the receiving warehouse has over 60,000 objects: rack frames, shelves, pallets, boxes, crates, barrels, lights, and clutter. Each filled rack slot contains a pallet plus 6-7 goods items on average, across up to 9 shelf levels. Every instance ID is generated directly from the scene graph. No human annotator touches it.

For context:

| Dataset | Environment | Semantic classes | Instance scale | Depth |
|---|---|---|---|---|
| ScanNet | Apartments, offices | ~40 | ~hundreds/scene | Sensor (noisy) |
| Hypersim | Synthetic rooms | ~40 | ~hundreds/scene | Rendered (exact) |
| KITTI | Outdoor driving | ~30 | ~dozens/frame | LiDAR (sparse) |
| Staer Warehouses v0.1 | Industrial warehouses | 78 | ~200,000 total | Rendered (exact) |

Labeling a single warehouse frame at the instance level we provide (every beam, every shelf, every box, every pallet) would take a human annotator hours per frame.

This level of annotation density matters for training models that need to count, distinguish, and locate objects in cluttered environments. A warehouse shelf might hold thirty boxes that look nearly identical. An instance-level model needs to understand that these are thirty separate objects, not one textured surface. A semantic model needs to distinguish rack from shelf from pallet from box, even when they're all the same shade of brown.
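To make the counting task concrete, here is a minimal sketch of how per-class instance counts could be read off one frame's label maps. It assumes the semantic and instance channels decode to same-shaped integer arrays with one ID per pixel; the class IDs in the toy example are made up, and whether the dataset reserves an ID for background is not something the text above specifies.

```python
import numpy as np


def count_instances_per_class(semantic: np.ndarray, instance: np.ndarray) -> dict[int, int]:
    """Count distinct instance IDs visible under each semantic class ID."""
    if semantic.shape != instance.shape:
        raise ValueError("semantic and instance masks must have the same shape")
    # Build one (class, instance) pair per pixel, then keep each distinct pair once.
    pairs = np.stack([semantic.ravel(), instance.ravel()], axis=1)
    unique_pairs = np.unique(pairs, axis=0)
    # Count how many distinct instances fall under each class.
    classes, counts = np.unique(unique_pairs[:, 0], return_counts=True)
    return {int(c): int(n) for c, n in zip(classes, counts)}


# Toy 3x4 frame: class 7 stands in for "box", class 2 for "shelf" (made-up IDs).
semantic = np.array([[7, 7, 7, 7],
                     [7, 7, 7, 7],
                     [2, 2, 2, 2]], dtype=np.uint16)
instance = np.array([[101, 101, 102, 103],
                     [101, 101, 102, 103],
                     [50, 50, 50, 50]], dtype=np.uint16)
print(count_instances_per_class(semantic, instance))  # {2: 1, 7: 3}
```

The three "box" regions share one semantic class but carry three instance IDs, which is exactly the distinction a purely semantic model cannot make.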

Procedural Generation and Domain Randomization

The dataset is not a fixed collection. It's produced by a procedural generation system that supports controlled variation across:

- Layout: rack spacing, aisle width (2m–5m), rack height (3m–9m), fill density (40%–95%)

- Materials: clean concrete, stained, epoxy-coated floors; goods in new, worn, and mixed condition

- Lighting: 600–4,500 lux ambient, 36k–150k lux high-bay fixtures, per-scene variance

- Contents: pallets, boxes of multiple sizes, plastic crates, placed with seeded randomization for reproducibility

Every scene is deterministically seeded. The same seed produces the same warehouse, enabling controlled experiments.
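The seeding idea can be illustrated with a small sketch. This is not the generator's actual code or schema: `WarehouseParams` and `sample_params` are hypothetical names, and uniform sampling within the quoted ranges is an assumption. The point is only that a private, seeded random generator makes a parameter draw repeatable.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class WarehouseParams:  # hypothetical container, not the generator's real schema
    aisle_width_m: float
    rack_height_m: float
    fill_density: float
    ambient_lux: float
    floor_material: str


def sample_params(seed: int) -> WarehouseParams:
    """Draw one parameter set from the ranges quoted above, using a private RNG."""
    rng = random.Random(seed)  # local generator: no dependence on global random state
    return WarehouseParams(
        aisle_width_m=rng.uniform(2.0, 5.0),
        rack_height_m=rng.uniform(3.0, 9.0),
        fill_density=rng.uniform(0.40, 0.95),
        ambient_lux=rng.uniform(600.0, 4500.0),
        floor_material=rng.choice(["clean concrete", "stained", "epoxy-coated"]),
    )


a = sample_params(seed=42)
b = sample_params(seed=42)
c = sample_params(seed=43)
print(a == b)  # True: the same seed reproduces the same parameters
```

Using a dedicated `random.Random` instance rather than the module-level functions keeps the draw independent of any other code that touches the global random state, which is what makes a controlled experiment repeatable.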

Format and Access

An open staging area with mezzanine, traffic cones, and a forklift. Scenes range from empty facilities to fully packed high-bay racking.

The dataset follows the EuRoC directory layout, widely used in the robotics community, with a synchronized timestamp CSV for each stream:

    scene_name/
    ├── cam0/
    │   ├── data/        # RGB frames
    │   └── data.csv     # timestamps
    ├── depth0/
    │   ├── data/        # 16-bit inverse depth
    │   └── data.csv
    ├── semantic0/
    │   ├── data/        # semantic class labels
    │   └── data.csv
    └── instance0/
        ├── data/        # instance IDs
        └── data.csv

RGB is available as H.265 video and individual PNGs. Depth, semantic, and instance channels use FFV1 lossless 16-bit encoding.
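A loader for this layout might look like the sketch below. It assumes each `data.csv` follows the usual EuRoC convention of a `#`-prefixed header line followed by `timestamp,filename` rows; the exact columns and file extensions in this release are not stated above, so the file names in the demo are placeholders. The helper names `read_index` and `aligned_frames` are illustrative.

```python
import csv
import tempfile
from pathlib import Path

MODALITIES = ("cam0", "depth0", "semantic0", "instance0")


def read_index(csv_path: Path) -> dict[int, str]:
    """Map timestamp -> filename from one data.csv (rows starting with '#' are headers)."""
    index = {}
    with csv_path.open(newline="") as f:
        for row in csv.reader(f):
            if not row or row[0].startswith("#"):
                continue
            index[int(row[0])] = row[1].strip()
    return index


def aligned_frames(scene_dir: Path) -> list[dict[str, Path]]:
    """Return one dict per timestamp present in all four modalities, in time order."""
    indexes = {m: read_index(scene_dir / m / "data.csv") for m in MODALITIES}
    common = set.intersection(*(set(ix) for ix in indexes.values()))
    return [
        {m: scene_dir / m / "data" / indexes[m][t] for m in MODALITIES}
        for t in sorted(common)
    ]


# Demo on a tiny fake scene so the sketch runs without the dataset.
with tempfile.TemporaryDirectory() as tmp:
    scene = Path(tmp) / "scene_name"
    for m in MODALITIES:
        (scene / m / "data").mkdir(parents=True)
        (scene / m / "data.csv").write_text(
            "#timestamp [ns],filename\n0,0.png\n33333333,33333333.png\n"
        )
    frames = aligned_frames(scene)
    print(len(frames), frames[1]["depth0"].name)  # 2 33333333.png
```

Intersecting timestamps across streams is a defensive choice: if the modalities really are frame-for-frame synchronized, the intersection simply equals every stream's full index.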

Synthetic Data in Context

Synthetic data is not a replacement for real-world captures. We've written about this before. Models trained purely on simulation can develop blind spots that only real-world exposure corrects.

But synthetic data has two strengths that are hard to replicate otherwise. First, for evaluation: when you want to measure whether a model handles repeating structures, you need ground truth that is unambiguous and an environment where the repetition is controlled. Real warehouses provide the repetition but not the ground truth. Lab datasets provide the ground truth but not the repetition. This dataset provides both.

Second, for pre-training and fine-tuning: a model that has only seen apartments and outdoor scenes will struggle in an industrial environment. Exposure to structured synthetic warehouses, with controlled variation in layout, lighting, and density, is a practical path to closing that gap before real-world deployment.

What We Hope Researchers Will Do With It

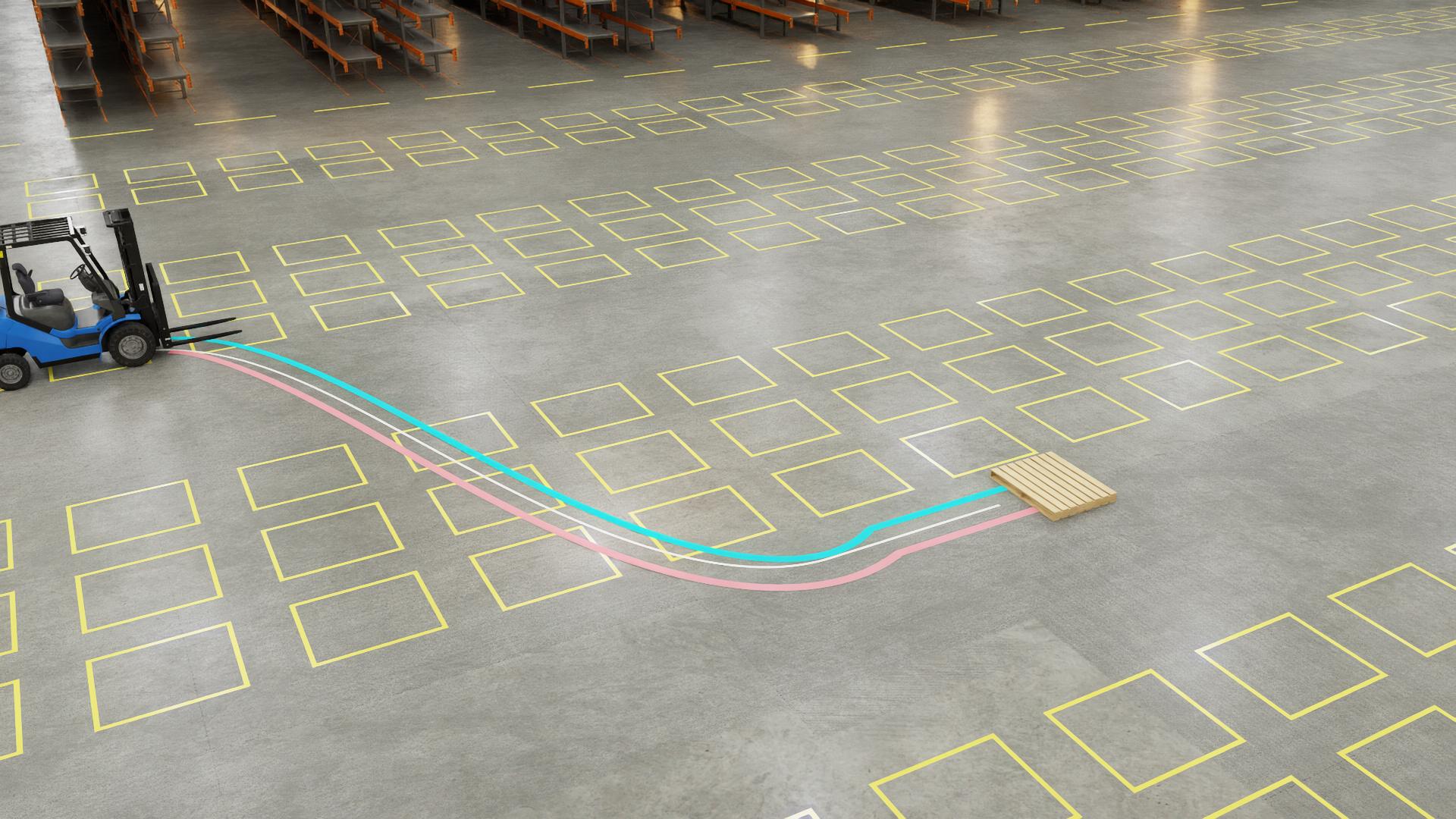

Beyond static datasets: these environments support dynamic scenarios like path planning, obstacle avoidance, and multi-agent simulation for physical AI testing.

This dataset is a starting point, not an endpoint. A few directions we think are particularly interesting:

- Benchmarking feedforward reconstruction models on environments with aggressive visual repetition. How do VGGT, DUSt3R, MASt3R, and similar architectures perform when every aisle looks the same?

- Instance-level understanding in dense, cluttered scenes. Can models learn to count and segment individual objects when thousands are present?

- Sim-to-real transfer for warehouse navigation. What domain gap remains after pre-training on procedurally generated industrial environments?

- Evaluation of mapping and localization systems that need to handle the repeating-pattern problem at scale
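As one possible starting point for the benchmarking direction, the sketch below scores a predicted depth map against rendered ground truth with two widely used metrics: absolute relative error and the fraction of pixels within a 1.25 ratio of the truth. The function name, the `max_depth_m` cutoff, and the assumption that predictions are already in meters are illustrative choices, not part of the dataset.

```python
import numpy as np


def depth_metrics(pred_m: np.ndarray, gt_m: np.ndarray, max_depth_m: float = 100.0) -> dict[str, float]:
    """Absolute relative error and delta<1.25 accuracy over valid pixels."""
    # Keep pixels where both maps hold a finite, positive depth within range.
    valid = (
        np.isfinite(gt_m) & (gt_m > 0) & (gt_m <= max_depth_m)
        & np.isfinite(pred_m) & (pred_m > 0)
    )
    if not valid.any():
        raise ValueError("no valid pixels to evaluate")
    pred, gt = pred_m[valid], gt_m[valid]
    abs_rel = float(np.mean(np.abs(pred - gt) / gt))
    ratio = np.maximum(pred / gt, gt / pred)
    delta1 = float(np.mean(ratio < 1.25))
    return {"abs_rel": abs_rel, "delta1": delta1}


# Toy 2x2 example: two exact pixels, two overestimates.
gt = np.array([[2.0, 4.0], [10.0, 20.0]])
pred = np.array([[2.0, 5.0], [10.0, 30.0]])
m = depth_metrics(pred, gt)
```

Per-frame depth scores are only part of the picture. The aisle-folding failure described earlier is a global error, so it would more likely show up in trajectory or map-level metrics than in any single frame's depth.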

Physical AI models will need to work in industrial spaces. The benchmarks we use should reflect that. We hope this dataset helps move the field in that direction.

This is v0.1: 15 warehouses, 78,400 m², roughly 200,000 object instances, 1,500 sequences. More is coming.